Lecture 31: Optimistic Linked Lists

COSC 273: Parallel and Distributed Computing

Spring 2023

Announcements

- Leaderboard submission results tomorrow

- Next leaderboard submission is Friday? Monday?

- Take-home quiz: released Wednesday (Gradescope), due Friday

  - concurrent linked lists

Today

Concurrent Linked Lists, Three Ways:

- Coarse locking

- Fine-grained locking

- Optimistic locking

A Generic Task

Store, access, and modify a collection of distinct elements:

The Set ADT:

- add an element

  - no effect if element already there

- remove an element

  - no effect if not present

- check if set contains an element

Java SimpleSet Interface

    public interface SimpleSet<T> {
        // Add an element to the SimpleSet. Returns true if the element
        // was not already in the set.
        boolean add(T x);

        // Remove an element from the SimpleSet. Returns true if the
        // element was previously in the set.
        boolean remove(T x);

        // Test if a given element is contained in the set.
        boolean contains(T x);
    }

Linked List SimpleSets

- Each Node stores:

  - reference to the stored object

  - reference to the next Node

  - a numerical key associated with the object

- The list stores

  - reference to head node

  - a tail node

  - head and tail have min and max key values

  - nodes have strictly increasing keys

Why Keys?

Question. Why is it helpful to store keys in increasing order?
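One possible answer, sketched in code: with keys in increasing order, a search can stop at the first node whose key is at least the target key, and the sentinel head and tail nodes mean the search never runs off the end of the list. The sketch below is sequential (no synchronization). The class name SortedListSketch is hypothetical, and using hashCode() as the key is an assumption: it presumes distinct elements have distinct hash codes that lie strictly between Integer.MIN_VALUE and Integer.MAX_VALUE.

```java
// Sequential (single-threaded) sketch of a sorted linked-list set.
// Assumption: x.hashCode() serves as the key and is unique per element.
class SortedListSketch<T> {
    private static final class Node<T> {
        final T item;   // reference to the stored object (null for sentinels)
        final int key;  // numerical key associated with the object
        Node<T> next;   // reference to the next Node

        Node(T item, int key) {
            this.item = item;
            this.key = key;
        }
    }

    private final Node<T> head;

    SortedListSketch() {
        // head and tail sentinels carry the min and max key values
        head = new Node<>(null, Integer.MIN_VALUE);
        head.next = new Node<>(null, Integer.MAX_VALUE);
    }

    // Walk forward until the next node's key is >= key. Because keys are
    // strictly increasing, nothing past that point can match.
    private Node<T> findPred(int key) {
        Node<T> pred = head;
        while (pred.next.key < key) {
            pred = pred.next;
        }
        return pred;
    }

    boolean add(T x) {
        int key = x.hashCode();
        Node<T> pred = findPred(key);
        Node<T> curr = pred.next;
        if (curr.key == key) {
            return false;           // already present
        }
        Node<T> node = new Node<>(x, key);
        node.next = curr;
        pred.next = node;           // splice in between pred and curr
        return true;
    }

    boolean remove(T x) {
        int key = x.hashCode();
        Node<T> pred = findPred(key);
        Node<T> curr = pred.next;
        if (curr.key != key) {
            return false;           // not present
        }
        pred.next = curr.next;      // unlink curr
        return true;
    }

    boolean contains(T x) {
        int key = x.hashCode();
        return findPred(key).next.key == key;
    }
}
```

Every operation reduces to the same step: find the pair (pred, curr) that brackets the key. The concurrent versions differ only in how they protect that pair.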

Our Goals

- Correctness, safety, liveness

  - deadlock-freedom

  - starvation-freedom?

  - nonblocking??

  - linearizability???

- Performance

  - parallelism?

Synchronization Philosophies

- Coarse-Grained (CoarseList.java)

  - lock whole data structure for every operation

- Fine-Grained (FineList.java)

  - only lock what is needed to avoid disaster

- Optimistic (OptimisticList.java)

  - don’t lock anything to read, only lock to modify

- Lazy (LazyList.java)

  - use “logical” removal, only lock occasionally

- Nonblocking (NonblockingList.java)

  - use atomics, not locks!

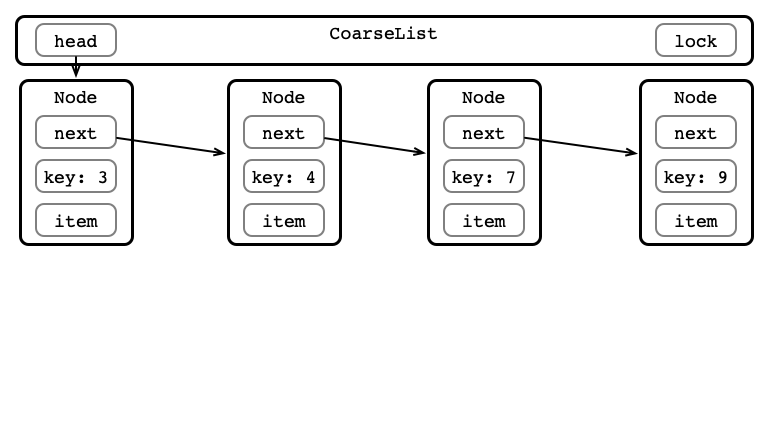

Coarse-grained Locking

One lock for whole data structure

For any operation:

- Lock entire list

- Perform operation

- Unlock list

See CoarseList.java

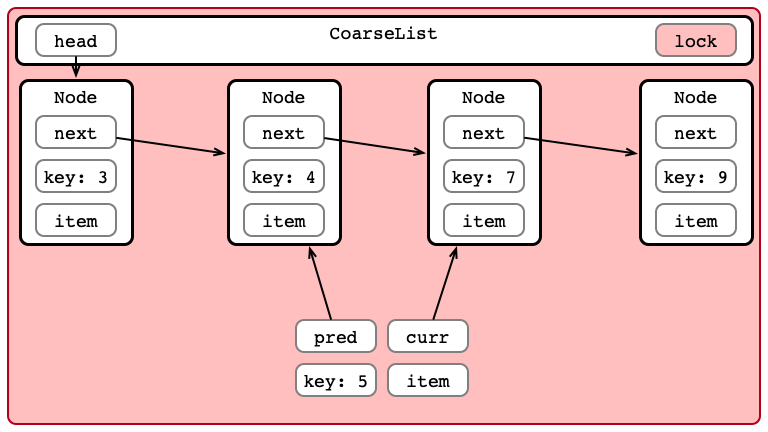

Coarse-grained Insertion

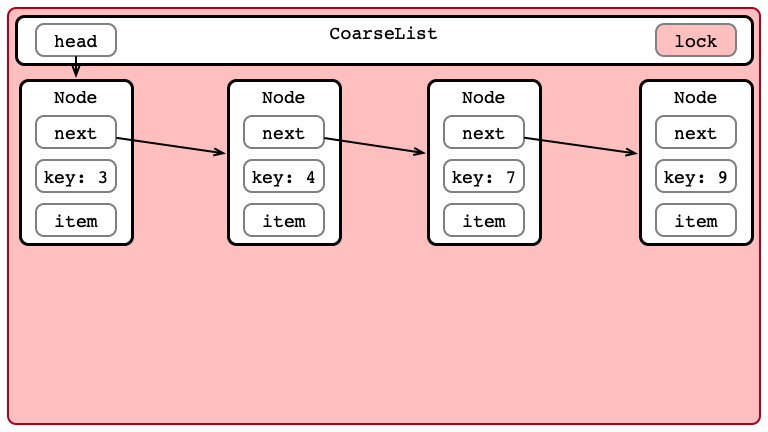

Step 1: Acquire Lock

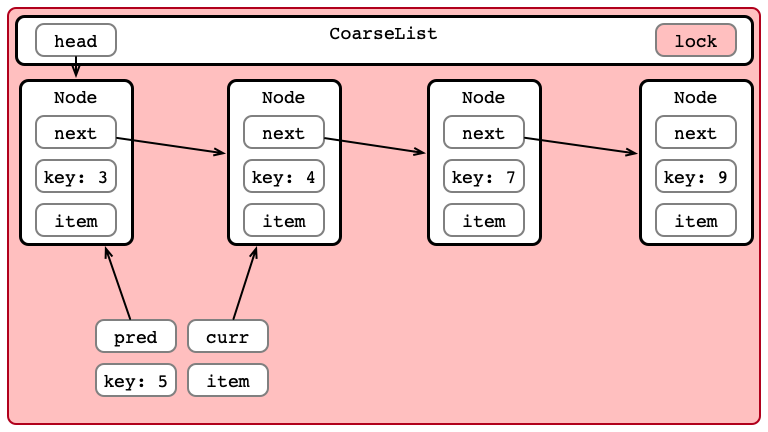

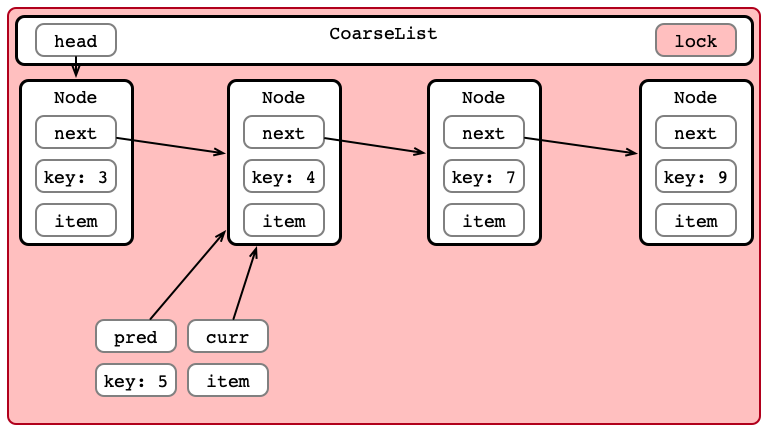

Step 2: Iterate to Find Location

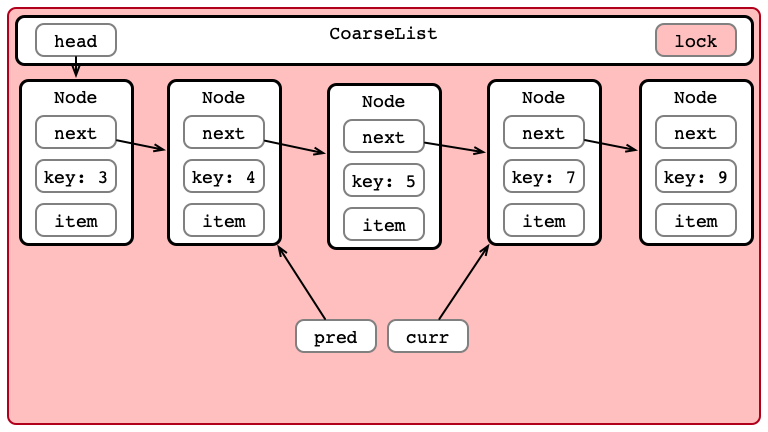

Step 3: Insert Item

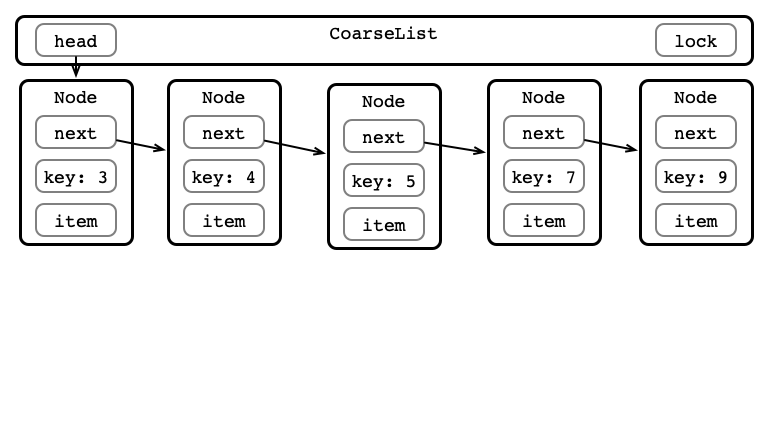

Step 4: Unlock List
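The four steps above can be sketched as follows. This is a minimal illustration, not the course's CoarseList.java: the class name CoarseListSketch is hypothetical, a single java.util.concurrent.locks.ReentrantLock guards the whole list, and hashCode() is assumed to give a unique key strictly between the sentinel keys.

```java
import java.util.concurrent.locks.ReentrantLock;

// Coarse-grained sketch: one lock protects every operation on the list.
class CoarseListSketch<T> {
    private static final class Node<T> {
        final T item;
        final int key;
        Node<T> next;

        Node(T item, int key) {
            this.item = item;
            this.key = key;
        }
    }

    private final Node<T> head;
    private final ReentrantLock lock = new ReentrantLock();

    CoarseListSketch() {
        head = new Node<>(null, Integer.MIN_VALUE);
        head.next = new Node<>(null, Integer.MAX_VALUE);
    }

    boolean add(T x) {
        int key = x.hashCode();          // assumed unique per element
        lock.lock();                     // Step 1: acquire the lock
        try {
            Node<T> pred = head;
            Node<T> curr = head.next;
            while (curr.key < key) {     // Step 2: iterate to find location
                pred = curr;
                curr = curr.next;
            }
            if (curr.key == key) {
                return false;            // already present
            }
            Node<T> node = new Node<>(x, key);
            node.next = curr;            // Step 3: insert item
            pred.next = node;
            return true;
        } finally {
            lock.unlock();               // Step 4: unlock, even on early return
        }
    }

    boolean remove(T x) {
        int key = x.hashCode();
        lock.lock();
        try {
            Node<T> pred = head;
            Node<T> curr = head.next;
            while (curr.key < key) {
                pred = curr;
                curr = curr.next;
            }
            if (curr.key != key) {
                return false;            // not present
            }
            pred.next = curr.next;
            return true;
        } finally {
            lock.unlock();
        }
    }

    boolean contains(T x) {
        int key = x.hashCode();
        lock.lock();
        try {
            Node<T> curr = head.next;
            while (curr.key < key) {
                curr = curr.next;
            }
            return curr.key == key;
        } finally {
            lock.unlock();
        }
    }
}
```

Placing unlock() in a finally block keeps the list usable if an operation returns early or throws.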

Coarse-grained Appraisal

Advantages:

- Easy to reason about

- Easy to implement

Disadvantages:

- No parallelism

- All operations are blocking

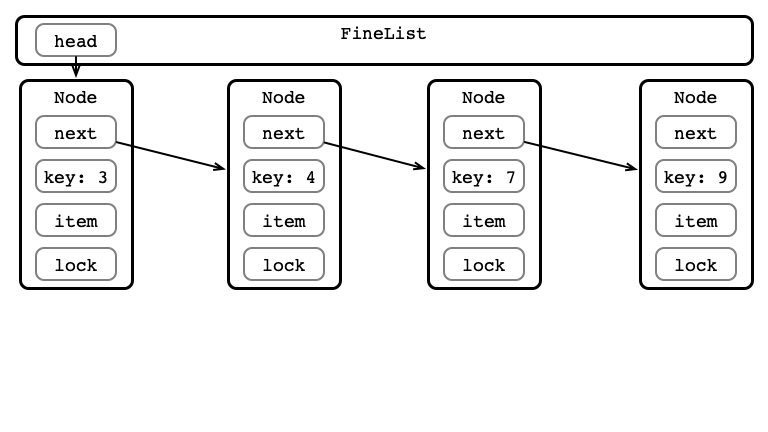

Fine-grained Locking

One lock per node

For any operation:

- Lock head and its next

- Hand-over-hand locking while searching

  - always hold at least one lock

- Perform operation

- Release locks

See FineList.java

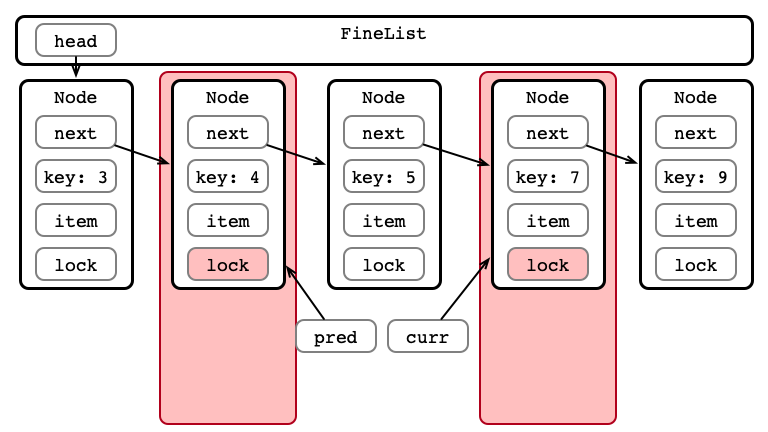

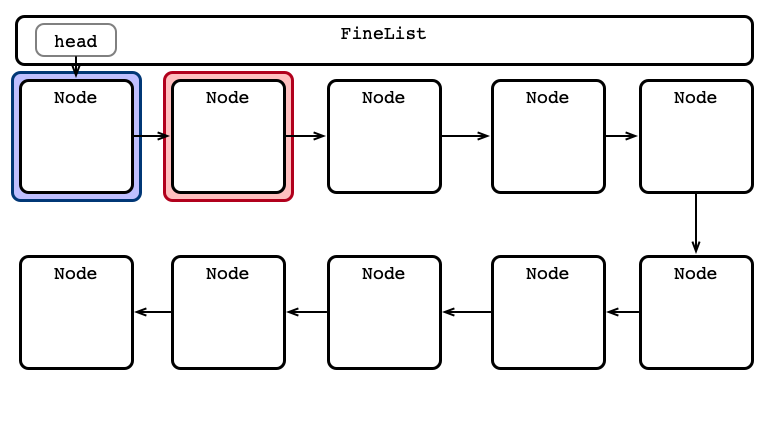

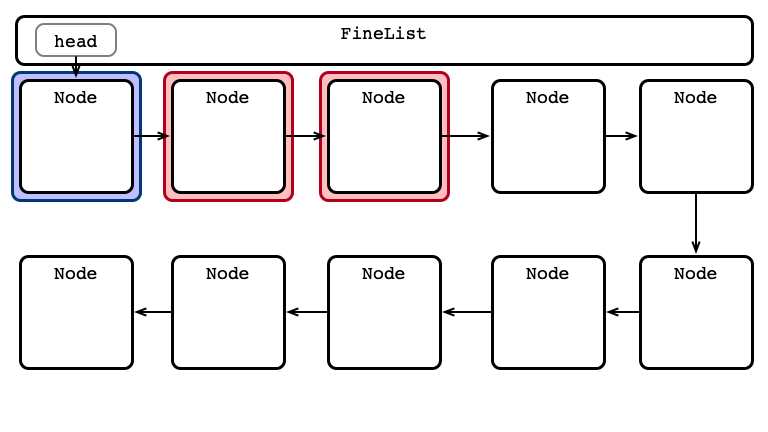

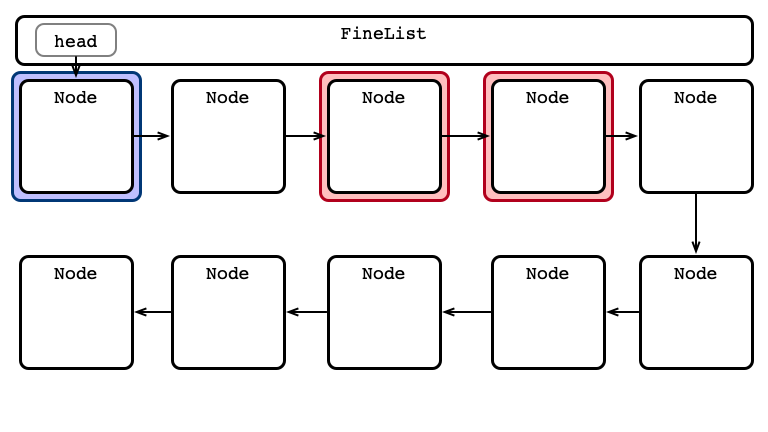

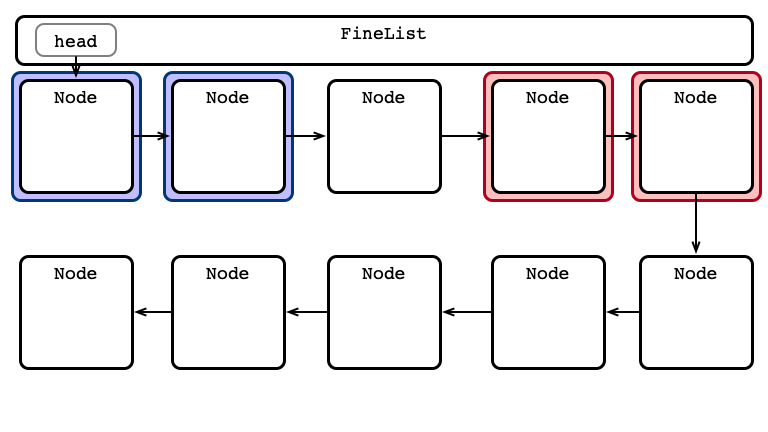

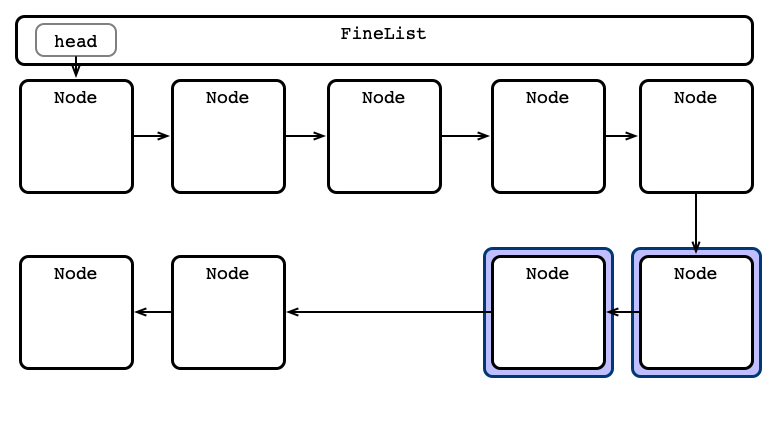

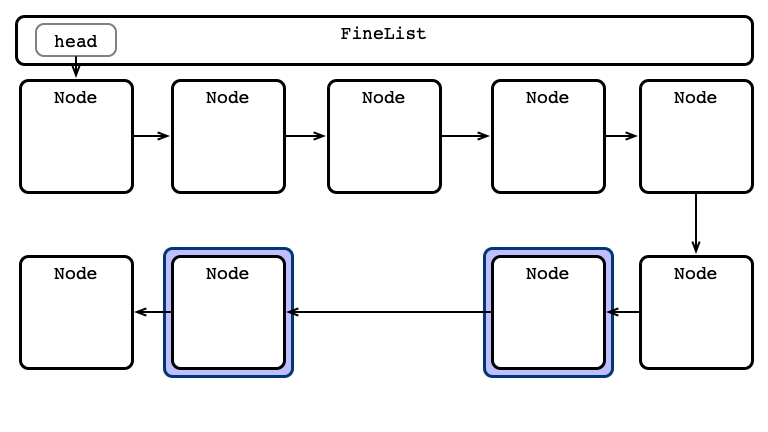

A Fine-grained Insertion

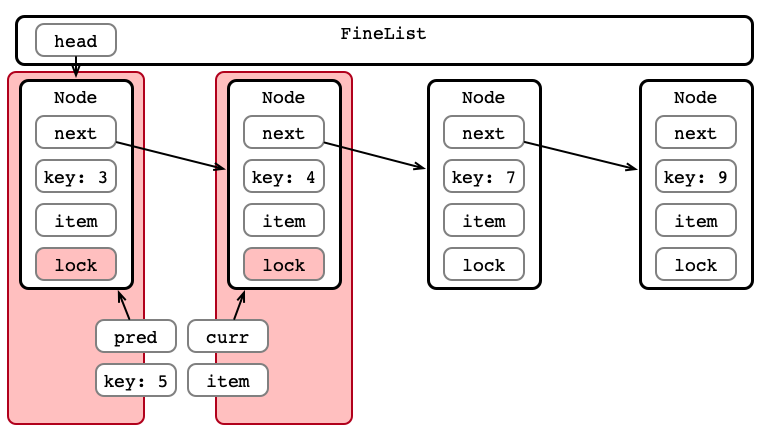

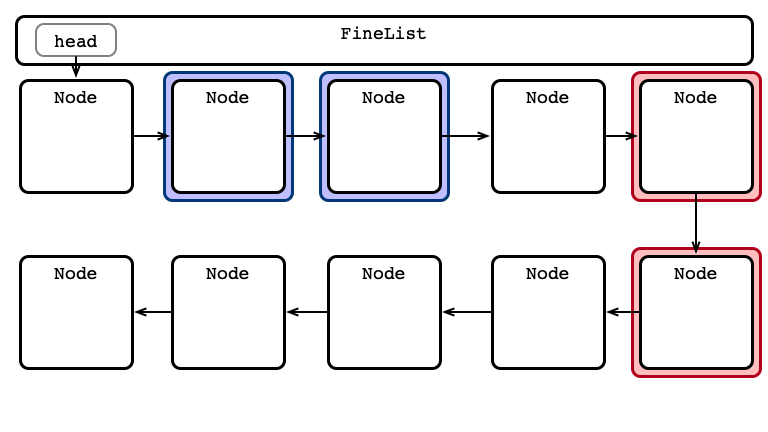

Step 1: Lock Initial Nodes

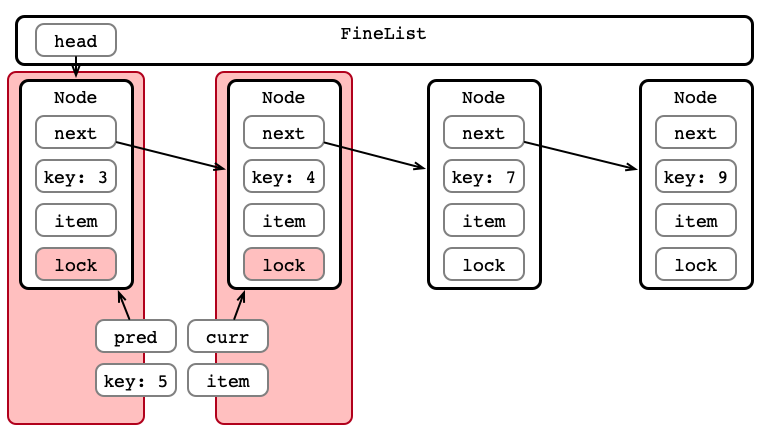

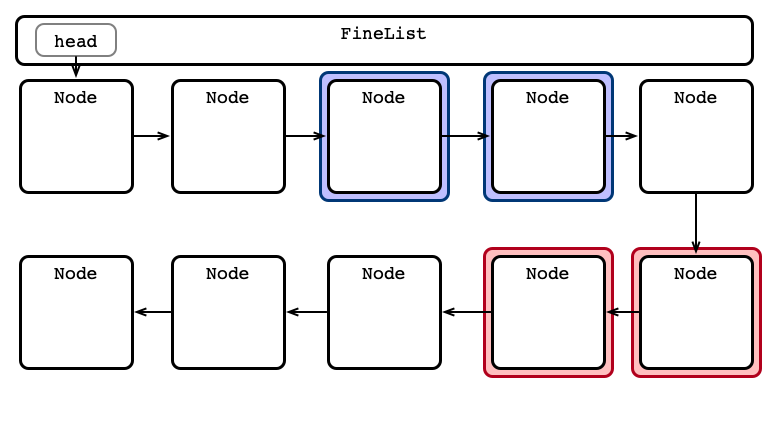

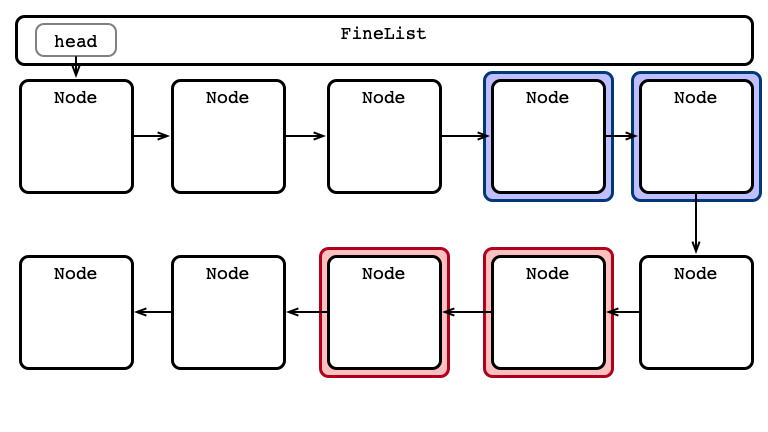

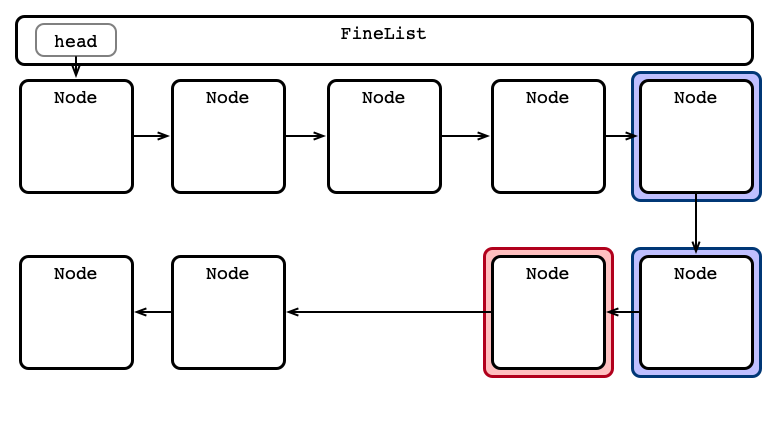

Step 2: Hand-over-hand Locking

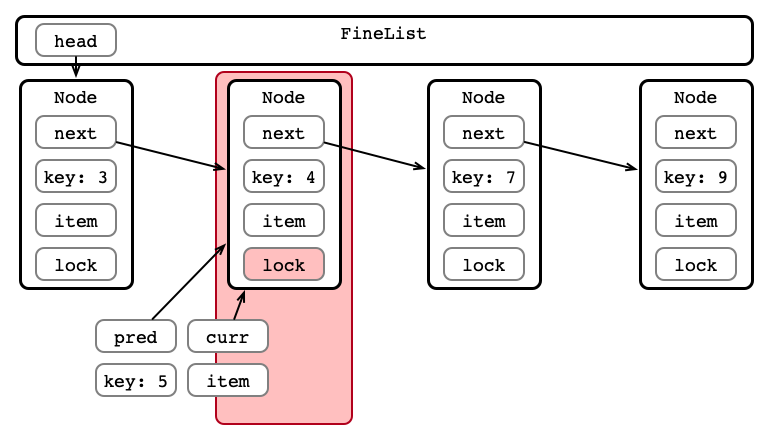

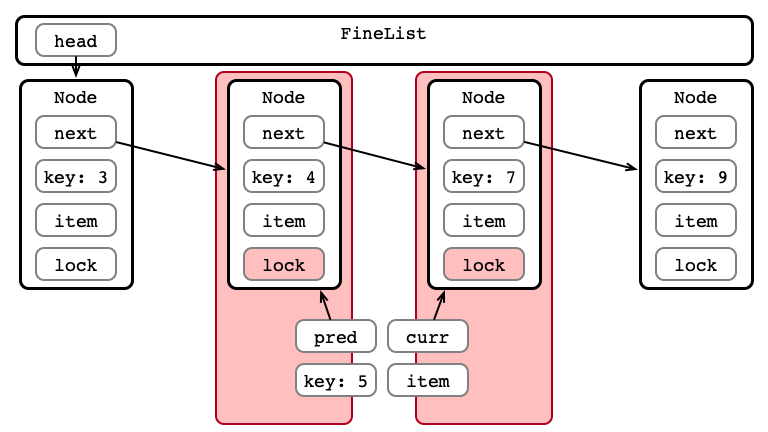

Step 3: Perform Insertion

Step 4: Unlock Nodes
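A minimal sketch of the hand-over-hand steps above, under stated assumptions: the class name FineListSketch and the helper lockedSearch are hypothetical (not taken from FineList.java), each node carries its own ReentrantLock, and hashCode() is assumed to give a unique key strictly between the sentinel keys.

```java
import java.util.concurrent.locks.ReentrantLock;

// Fine-grained sketch: one lock per node, acquired hand-over-hand.
class FineListSketch<T> {
    private static final class Node<T> {
        final T item;
        final int key;
        Node<T> next;
        final ReentrantLock lock = new ReentrantLock();

        Node(T item, int key) {
            this.item = item;
            this.key = key;
        }
    }

    private final Node<T> head;

    FineListSketch() {
        head = new Node<>(null, Integer.MIN_VALUE);
        head.next = new Node<>(null, Integer.MAX_VALUE);
    }

    // Returns pred with BOTH pred and pred.next locked, where
    // pred.key < key <= pred.next.key. The caller must unlock both.
    private Node<T> lockedSearch(int key) {
        Node<T> pred = head;
        pred.lock.lock();                // Step 1: lock initial nodes
        Node<T> curr = pred.next;
        curr.lock.lock();
        while (curr.key < key) {         // Step 2: hand-over-hand
            pred.lock.unlock();          // still holding curr's lock here
            pred = curr;
            curr = curr.next;
            curr.lock.lock();
        }
        return pred;
    }

    boolean add(T x) {
        int key = x.hashCode();
        Node<T> pred = lockedSearch(key);
        Node<T> curr = pred.next;
        try {
            if (curr.key == key) {
                return false;            // already present
            }
            Node<T> node = new Node<>(x, key);
            node.next = curr;            // Step 3: perform insertion
            pred.next = node;
            return true;
        } finally {
            curr.lock.unlock();          // Step 4: unlock nodes
            pred.lock.unlock();
        }
    }

    boolean remove(T x) {
        int key = x.hashCode();
        Node<T> pred = lockedSearch(key);
        Node<T> curr = pred.next;
        try {
            if (curr.key != key) {
                return false;            // not present
            }
            pred.next = curr.next;       // unlink while holding both locks
            return true;
        } finally {
            curr.lock.unlock();
            pred.lock.unlock();
        }
    }

    boolean contains(T x) {
        int key = x.hashCode();
        Node<T> pred = lockedSearch(key);
        Node<T> curr = pred.next;
        try {
            return curr.key == key;
        } finally {
            curr.lock.unlock();
            pred.lock.unlock();
        }
    }
}
```

Because every thread acquires locks in list order starting from head, no cycle of waiting threads can form, which is one way to argue deadlock-freedom for this sketch.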

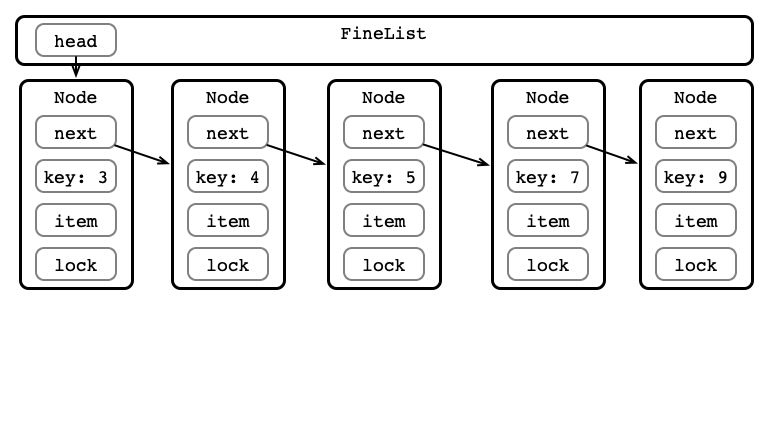

An Advantage: Parallel Access

Fine-grained Appraisal

Advantages:

- Parallel access

- Reasonably simple implementation

Disadvantages:

- More locking overhead

  - can be much slower than coarse-grained

- All operations are blocking

Optimistic Synchronization

Fine-grained locking wastes resources on locking

- Nodes are locked when traversed

- Locked even if not modified!

A better procedure?

- Traverse without locking

- Lock relevant nodes

- Perform operation

- Unlock nodes

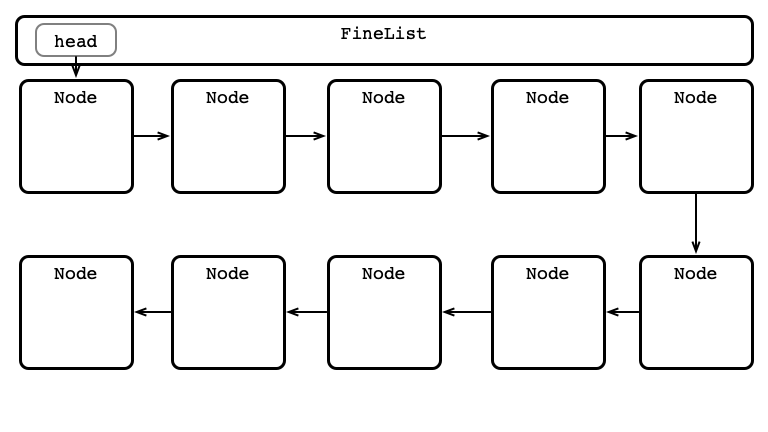

A Better Way?

What Could Go Wrong?

An Issue!

Between traversing and locking

- Another thread modifies the list

- Now locked nodes aren’t the right nodes!

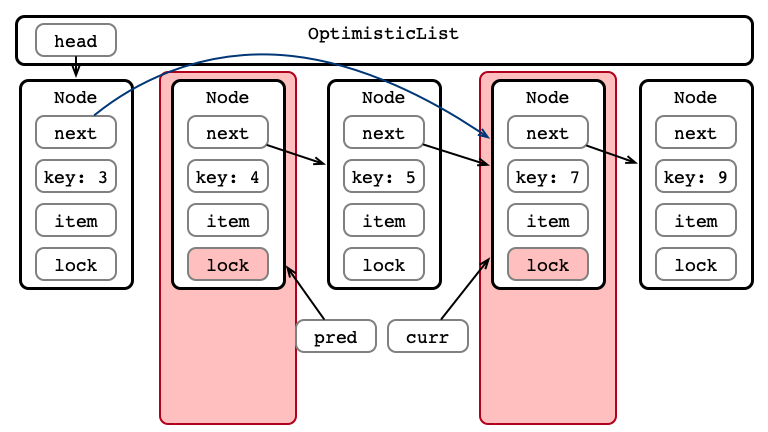

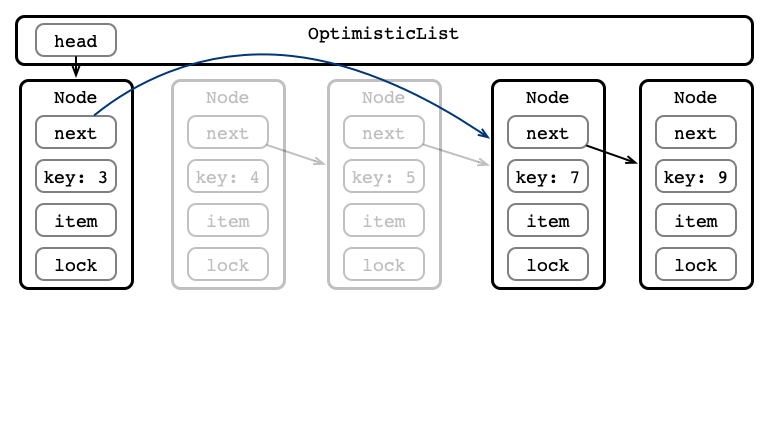

An Issue, Illustrated

How can we Address this Issue?

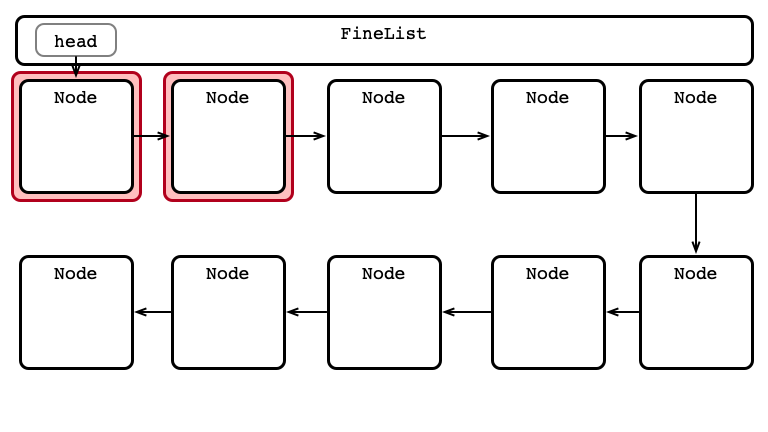

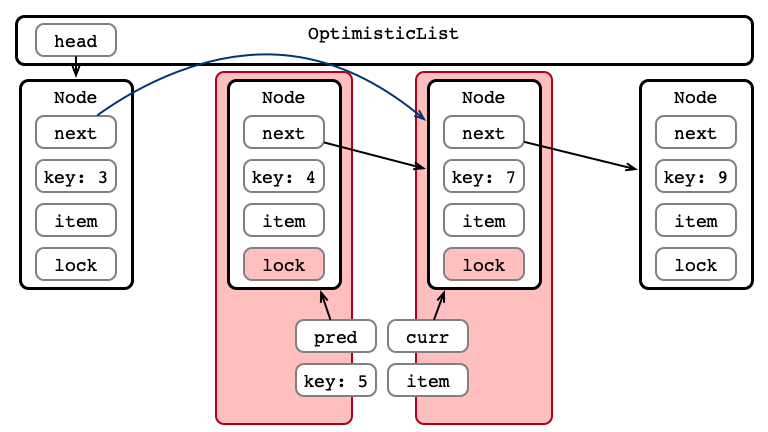

Optimistic Synchronization, Validated

- Traverse without locking

- Lock relevant nodes

- Validate list

  - if validation fails, go back to Step 1 (traverse again)

- Perform operation

- Unlock nodes

See OptimisticList.java

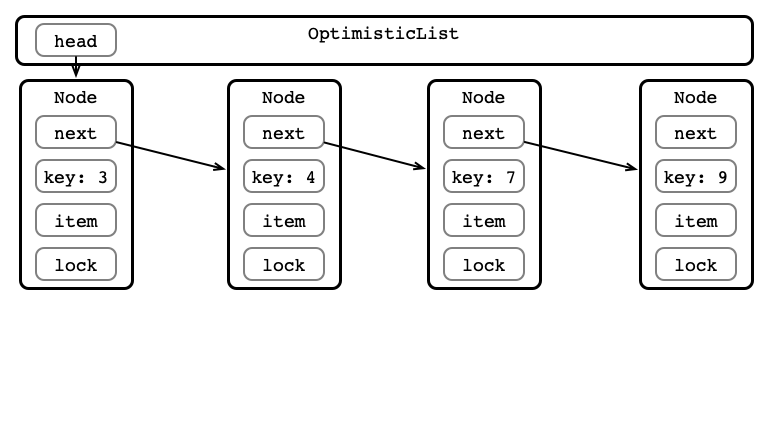

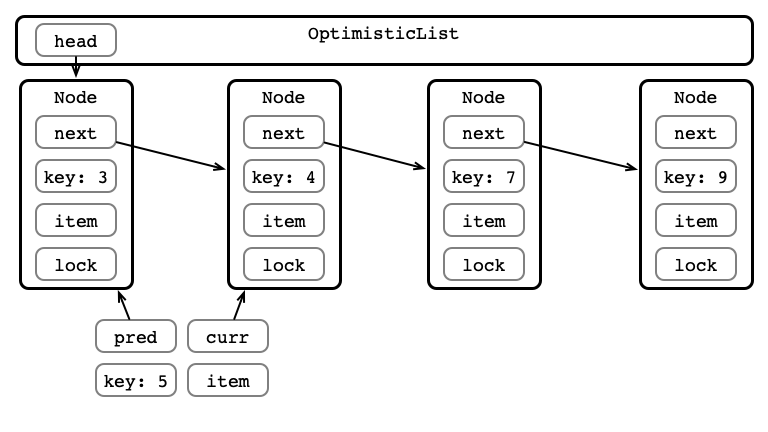

How do we Validate?

After locking, ensure that:

- pred is reachable from head

- curr is pred’s successor

If these conditions aren’t met:

- Start over!

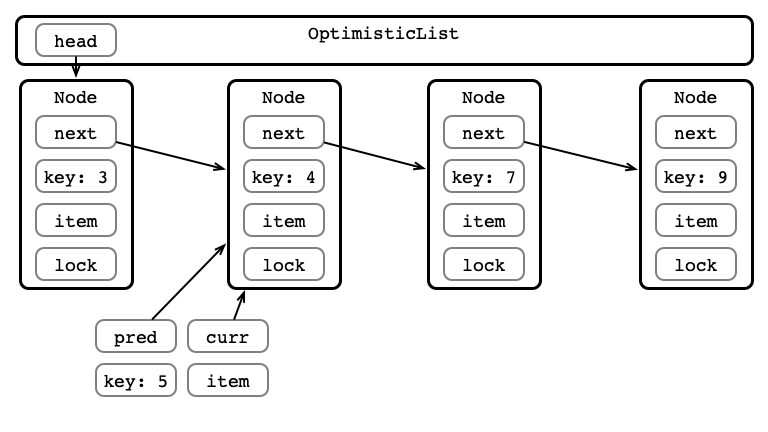

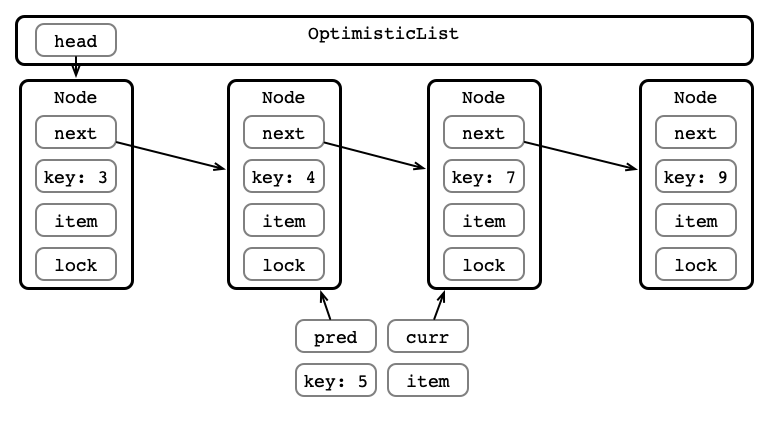

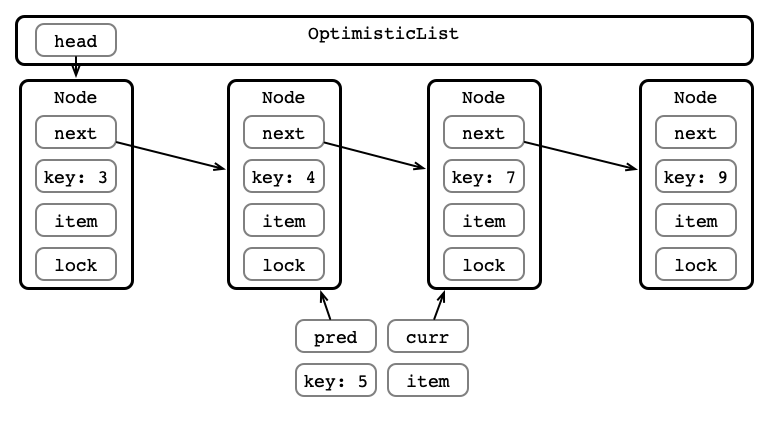

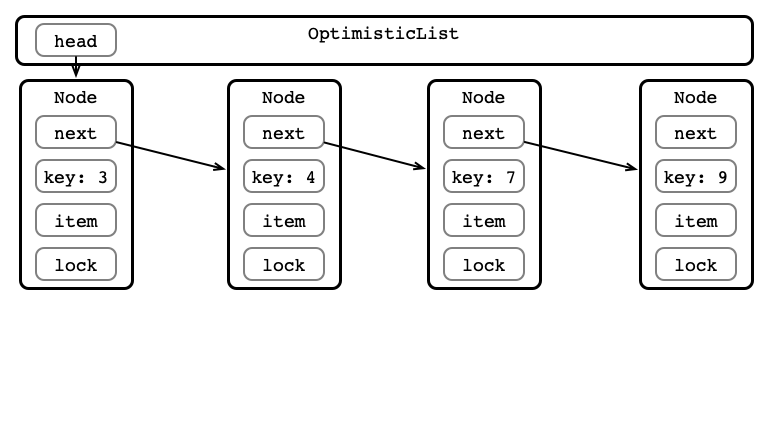

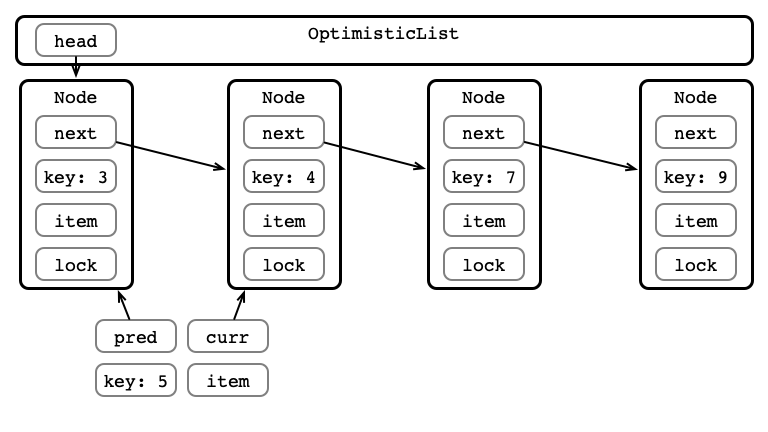

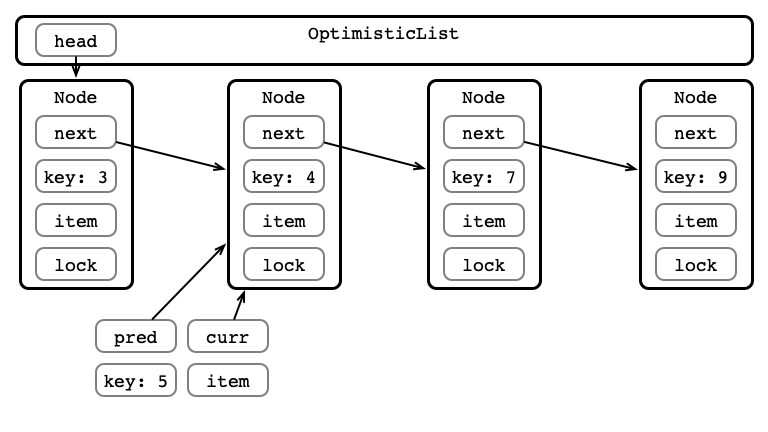

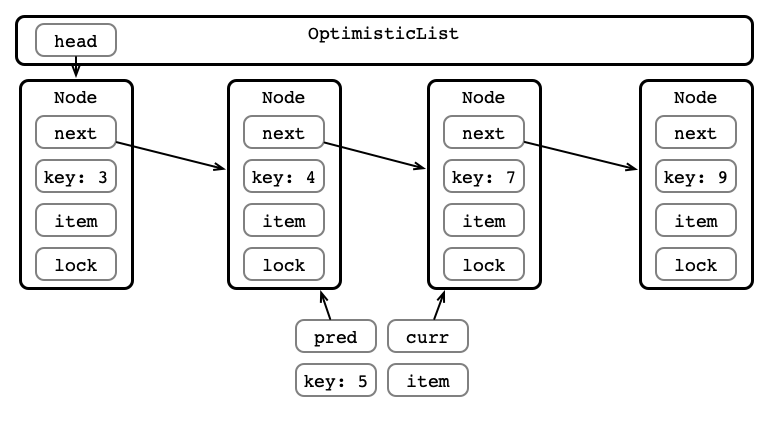

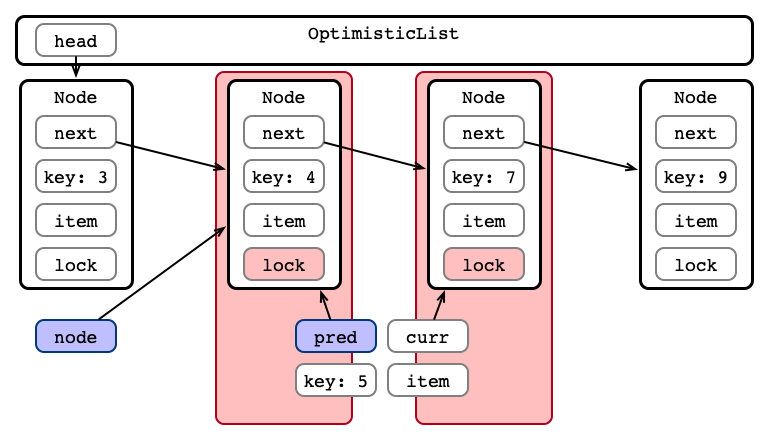

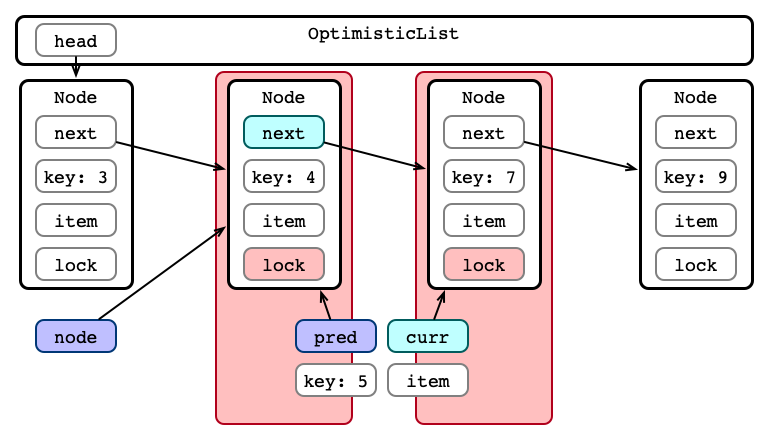

Optimistic Insertion

Step 1: Traverse the List

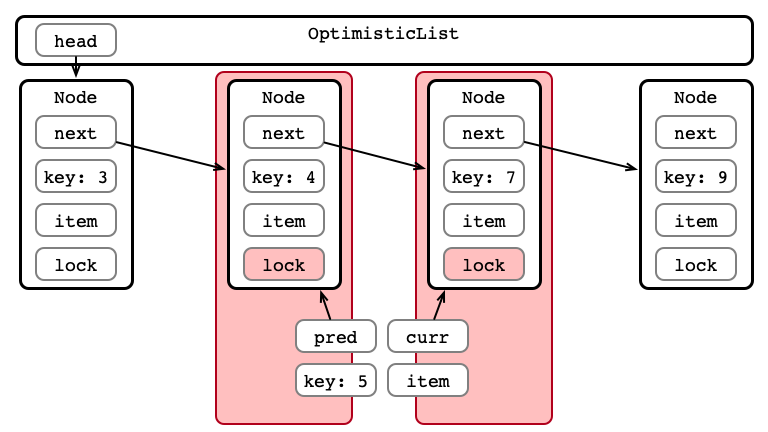

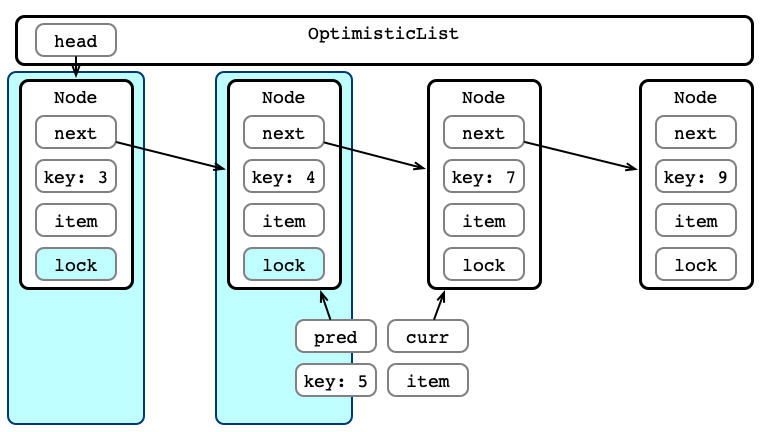

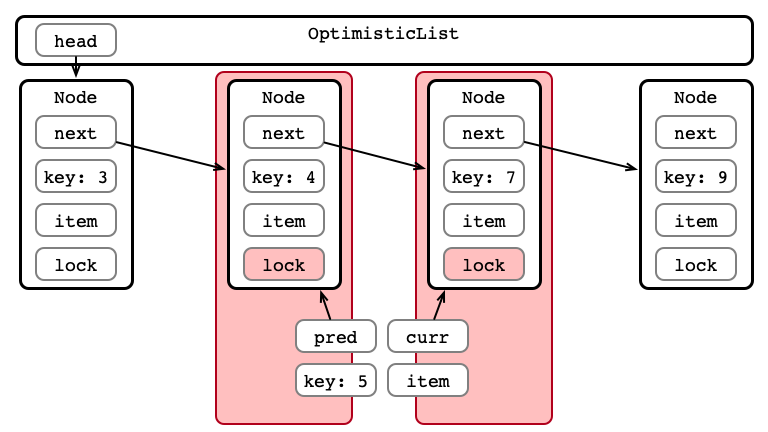

Step 2: Acquire Locks

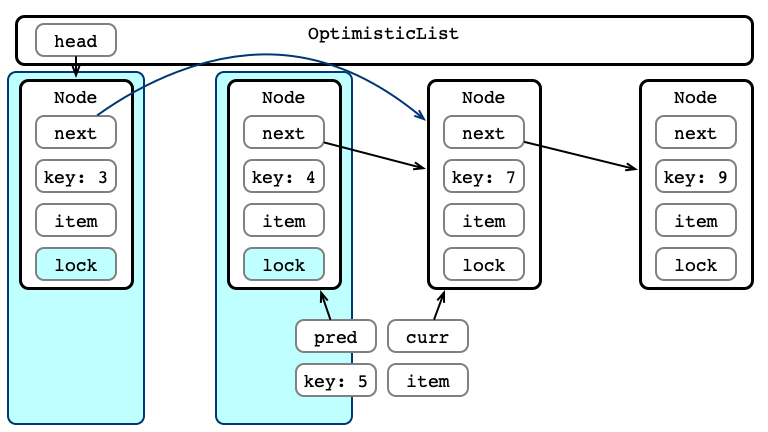

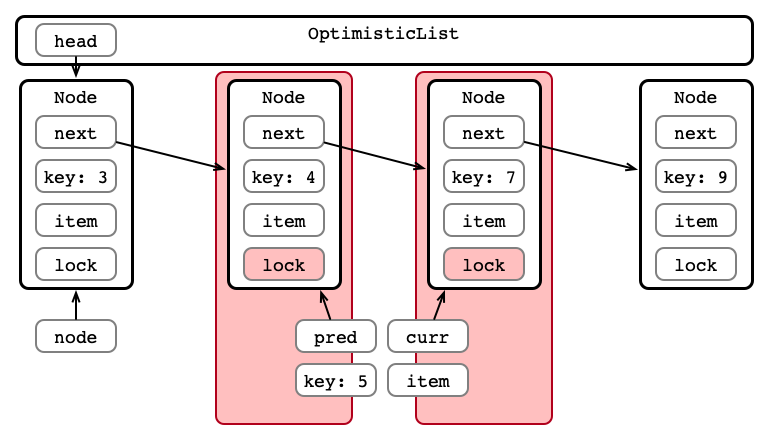

Step 3: Validate List - Traverse

Step 3: Validate List - pred Reachable?

Step 3: Validate List - Is curr next?

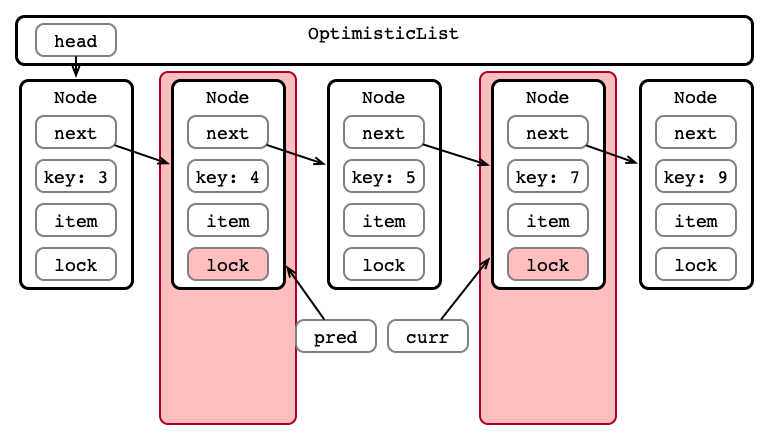

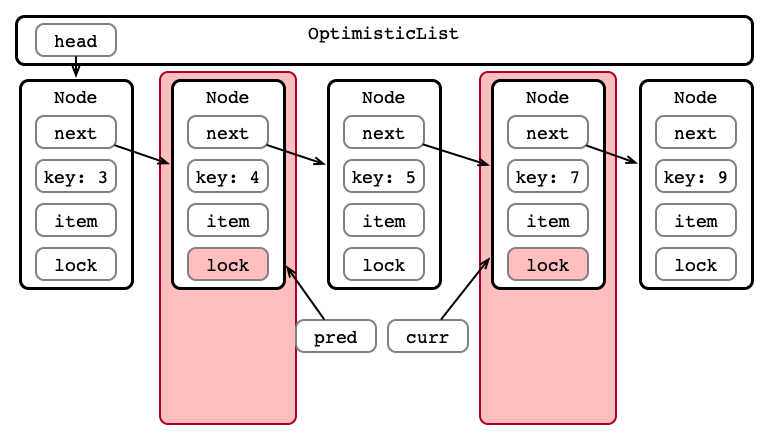

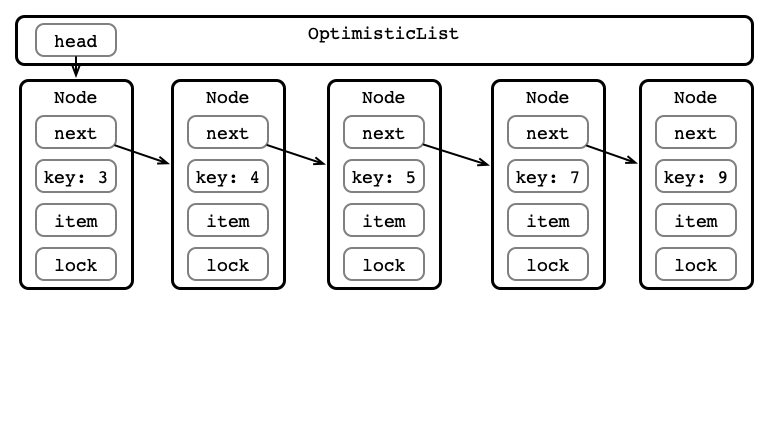

Step 4: Perform Insertion

Step 5: Release Locks

Implementing Validation

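    // Called while holding the locks on pred and curr. Checks that pred is
    // still reachable from head and that curr is still pred's successor.
    private boolean validate(Node pred, Node curr) {
        Node node = head;
        // Keys are strictly increasing, so stop once we pass pred's key.
        while (node.key <= pred.key) {
            if (node == pred) {
                return pred.next == curr;
            }
            node = node.next;
        }
        return false;  // pred is no longer in the list
    }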
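A minimal sketch of how a validation check like this might fit into complete operations, following the five steps of optimistic insertion. The class name OptimisticListSketch is hypothetical and this is not the course's OptimisticList.java. Assumptions: hashCode() gives a unique key strictly between the sentinel keys, next is declared volatile so that the unlocked traversal sees up-to-date links, and contains also locks and validates, as add and remove do.

```java
import java.util.concurrent.locks.ReentrantLock;

// Optimistic sketch: traverse without locks, then lock, validate, and act.
class OptimisticListSketch<T> {
    private static final class Node<T> {
        final T item;
        final int key;
        volatile Node<T> next;           // read without locks during traversal
        final ReentrantLock lock = new ReentrantLock();

        Node(T item, int key) {
            this.item = item;
            this.key = key;
        }
    }

    private final Node<T> head;

    OptimisticListSketch() {
        head = new Node<>(null, Integer.MIN_VALUE);
        head.next = new Node<>(null, Integer.MAX_VALUE);
    }

    // Same check as validate: pred reachable from head, curr its successor.
    private boolean validate(Node<T> pred, Node<T> curr) {
        Node<T> node = head;
        while (node.key <= pred.key) {
            if (node == pred) {
                return pred.next == curr;
            }
            node = node.next;
        }
        return false;
    }

    boolean add(T x) {
        int key = x.hashCode();
        while (true) {                          // retry until validation succeeds
            Node<T> pred = head;
            Node<T> curr = pred.next;
            while (curr.key < key) {            // Step 1: traverse, no locks
                pred = curr;
                curr = curr.next;
            }
            pred.lock.lock();                   // Step 2: acquire locks
            curr.lock.lock();
            try {
                if (validate(pred, curr)) {     // Step 3: validate
                    if (curr.key == key) {
                        return false;           // already present
                    }
                    Node<T> node = new Node<>(x, key);
                    node.next = curr;           // Step 4: perform insertion
                    pred.next = node;
                    return true;
                }
            } finally {
                curr.lock.unlock();             // Step 5: release locks
                pred.lock.unlock();
            }
        }
    }

    boolean remove(T x) {
        int key = x.hashCode();
        while (true) {
            Node<T> pred = head;
            Node<T> curr = pred.next;
            while (curr.key < key) {
                pred = curr;
                curr = curr.next;
            }
            pred.lock.lock();
            curr.lock.lock();
            try {
                if (validate(pred, curr)) {
                    if (curr.key != key) {
                        return false;           // not present
                    }
                    pred.next = curr.next;      // unlink; curr keeps its next
                    return true;
                }
            } finally {
                curr.lock.unlock();
                pred.lock.unlock();
            }
        }
    }

    boolean contains(T x) {
        int key = x.hashCode();
        while (true) {
            Node<T> pred = head;
            Node<T> curr = pred.next;
            while (curr.key < key) {
                pred = curr;
                curr = curr.next;
            }
            pred.lock.lock();
            curr.lock.lock();
            try {
                if (validate(pred, curr)) {
                    return curr.key == key;
                }
            } finally {
                curr.lock.unlock();
                pred.lock.unlock();
            }
        }
    }
}
```

A removed node keeps its next reference, so a thread that is traversing through it without locks still reaches the tail sentinel. The while (true) loop has no bound on retries, which is why an unlucky thread can fail validation repeatedly.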

Optimistic Appraisal

Advantages:

- Less locking than fine-grained

- More opportunities for parallelism than coarse-grained

Disadvantages:

- Validation can fail, forcing the operation to start over

- Not starvation-free

  - even if locks are starvation-free

Performance Tests

On HPC Cluster:

- Compare running times of performing 1M operations

  - add/remove/contains sequence chosen at random

  - elements chosen from 1 to N at random

    - N is universe size

- Parameters

- universe size $\approx$ set size

- number of threads

See SetTester.java
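A rough sketch of what such a timing experiment might look like. This is not SetTester.java: the class name SetTimingSketch and the method timeRandomOps are hypothetical, each operation type is assumed to be chosen with equal probability, and the method takes a java.util.Set so it can be tried with a stand-in from the standard library such as ConcurrentSkipListSet. Testing the list implementations from this lecture would need a thin adapter or a version written against SimpleSet.

```java
import java.util.Set;
import java.util.concurrent.ThreadLocalRandom;

// Hypothetical timing harness: random add/remove/contains over a universe.
class SetTimingSketch {
    // Splits totalOps operations evenly over numThreads threads, each picking
    // elements uniformly from 1..universe, and returns elapsed nanoseconds.
    static long timeRandomOps(Set<Integer> set, int numThreads,
                              int totalOps, int universe) {
        int opsPerThread = totalOps / numThreads;
        Thread[] threads = new Thread[numThreads];
        for (int t = 0; t < numThreads; t++) {
            threads[t] = new Thread(() -> {
                ThreadLocalRandom rng = ThreadLocalRandom.current();
                for (int i = 0; i < opsPerThread; i++) {
                    int x = rng.nextInt(1, universe + 1);  // element in 1..N
                    switch (rng.nextInt(3)) {              // assumed uniform mix
                        case 0:
                            set.add(x);
                            break;
                        case 1:
                            set.remove(x);
                            break;
                        default:
                            set.contains(x);
                    }
                }
            });
        }
        long start = System.nanoTime();
        for (Thread th : threads) {
            th.start();
        }
        try {
            for (Thread th : threads) {
                th.join();                                 // wait for all workers
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while timing", e);
        }
        return System.nanoTime() - start;
    }
}
```

For example, `SetTimingSketch.timeRandomOps(new java.util.concurrent.ConcurrentSkipListSet<>(), 8, 1_000_000, 8192)` would time one million operations on eight threads. A single run like this includes thread start-up and JIT warm-up, so repeated runs give a more meaningful comparison.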

Performance Predictions?

Under what conditions do you expect coarse/fine/optimistic strategies to be performant?

- Number of threads (1 to 128 on HPC)

- Set universe size (8 to 8,192)

Performance v. Size, 1 Thread

Performance v. Size, 128 Threads

Time v. Threads, 8 Elements

Time v. Threads, 8,192 Elements

Coarse Time v. Threads

Fine Time v. Threads

Optimistic Time v. Threads

Next Time

Another Way: Lazy Synchronization

- don’t modify the list unless you really have to