Lecture 16: Concurrent Linked Lists

Overview

- Lab 04 Tips

- Set as a Linked List

- Synchronization Philosophies

- Coarse-grained Linked List

- Fine-grained Linked List

- Optimistic Linked List

Lab 04 Tips

Prime Tasks

Suggested structure:

1. Compute small primes (up to `Math.sqrt(MAX)`)
   - use `Primes.getPrimesUpTo(int max)`
2. Use small primes and SoE (Sieve of Eratosthenes) to find rest of primes
   - consider a block `[n, n + k]` of numbers from `n` to `n + k`
   - for each small prime `p`, remove multiples of `p` from `[n, n + k]`
   - remaining numbers are prime
3. Write primes from step 2 in order to `primes` array

Have a dedicated thread for step 3; use several threads to perform step 2 in parallel.
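A minimal single-threaded sketch of steps 1 and 2 for one block may help make the structure concrete. The class `BlockSieve` and both method names are hypothetical; `smallPrimesUpTo` is only a stand-in for the lab's `Primes.getPrimesUpTo(int max)`, whose actual implementation is not shown here.

```java
import java.util.ArrayList;
import java.util.List;

class BlockSieve {
    // Hypothetical stand-in for the lab's Primes.getPrimesUpTo(int max):
    // a plain Sieve of Eratosthenes returning all primes <= max (max >= 0).
    static List<Integer> smallPrimesUpTo(int max) {
        boolean[] composite = new boolean[max + 1];
        List<Integer> primes = new ArrayList<>();
        for (int i = 2; i <= max; i++) {
            if (composite[i]) {
                continue;
            }
            primes.add(i);
            for (long j = (long) i * i; j <= max; j += i) {
                composite[(int) j] = true;
            }
        }
        return primes;
    }

    // Step 2 for one block: the primes in [n, n + k], in increasing order.
    // Assumes smallPrimes contains every prime p with p * p <= n + k.
    static List<Integer> primesInBlock(int n, int k, List<Integer> smallPrimes) {
        boolean[] composite = new boolean[k + 1];   // composite[i] describes n + i
        for (int p : smallPrimes) {
            // First multiple of p inside the block, but never below p * p,
            // so that p itself is not crossed out.
            long first = Math.max((long) p * p, ((long) n + p - 1) / p * p);
            for (long m = first; m <= (long) n + k; m += p) {
                composite[(int) (m - n)] = true;
            }
        }
        List<Integer> primes = new ArrayList<>();
        for (int i = 0; i <= k; i++) {
            if (!composite[i] && n + i >= 2) {
                primes.add(n + i);
            }
        }
        return primes;
    }
}
```

Each call to `primesInBlock` touches only its own `composite` array, which is what lets several blocks be processed by different threads at once.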

Prime Tasks in Pictures

Performance Optimizations

- Use reasonably small objects
  - several smaller tasks/arrays are better than few larger ones
  - arrays should fit in low-level cache
- Minimize sharing of objects
  - each object should be written by one thread, and read by at most one other thread
  - think of “passing” completed tasks from producer to consumer
  - limit concurrent access
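One way to picture "passing" completed tasks is with a thread pool and `Future`s: each worker fills a small array that only it writes, and a single consumer reads the results in block order. This is only a sketch under assumptions, not the lab's required design. The class `BlockPipeline` and its methods are hypothetical, and trial division stands in for the sieve step to keep the example short.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

class BlockPipeline {
    // Placeholder block computation (trial division). Each call builds a
    // fresh array that is written only by the task that created it.
    static int[] primesInBlock(int lo, int hi) {
        int[] buf = new int[hi - lo + 1];
        int count = 0;
        for (int x = Math.max(lo, 2); x <= hi; x++) {
            boolean prime = true;
            for (int d = 2; (long) d * d <= x; d++) {
                if (x % d == 0) {
                    prime = false;
                    break;
                }
            }
            if (prime) {
                buf[count++] = x;
            }
        }
        return Arrays.copyOf(buf, count);
    }

    // Producers run in the pool; the calling thread is the only consumer
    // and the only writer of the output list.
    static List<Integer> primesUpTo(int max, int blockSize, int nThreads) {
        ExecutorService pool = Executors.newFixedThreadPool(nThreads);
        try {
            List<Future<int[]>> pending = new ArrayList<>();
            for (int lo = 2; lo <= max; lo += blockSize) {
                final int start = lo;
                final int end = Math.min(max, lo + blockSize - 1);
                Callable<int[]> task = () -> primesInBlock(start, end);
                pending.add(pool.submit(task));
            }
            List<Integer> primes = new ArrayList<>();
            for (Future<int[]> f : pending) {   // consume in block order
                for (int p : f.get()) {
                    primes.add(p);
                }
            }
            return primes;
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException("block task failed", e);
        } finally {
            pool.shutdown();
        }
    }
}
```

Because every result array has exactly one writer (its task) and one reader (the consumer), no explicit locking is needed; `Future.get()` provides the hand-off.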

A Set

A Set of elements:

- store a collection of distinct elements
- `add` an element
  - no effect if element already there
- `remove` an element
  - no effect if not present
- check if set `contains` an element

An Interface

    public interface SimpleSet<T> {
        /*
         * Add an element to the SimpleSet. Returns true if the element
         * was not already in the set.
         */
        boolean add(T x);

        /*
         * Remove an element from the SimpleSet. Returns true if the
         * element was previously in the set.
         */
        boolean remove(T x);

        /*
         * Test if a given element is contained in the set.
         */
        boolean contains(T x);
    }

Implementing SimpleSet

Store elements in a linked list of nodes

- Each `Node` stores:
  - reference to the stored object
  - reference to the next `Node`
  - a key associated with the object
    - use hash code of object
    - keys can be sorted
- The list stores
  - reference to `head` node
  - a `tail` node
  - `head` and `tail` have min and max key values
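The node layout described above might look roughly like the following unsynchronized sketch. The class name `SentinelList` is hypothetical, and the code assumes that two objects with equal hash codes are the same element, a simplification for illustration.

```java
class SentinelList<T> {
    // One list node: the stored object, its key, and a link to the next node.
    static final class Node<T> {
        final T item;     // stored object (null for the two sentinels)
        final int key;    // hash code of item; sentinels use the extreme int values
        Node<T> next;

        Node(T item, int key, Node<T> next) {
            this.item = item;
            this.key = key;
            this.next = next;
        }
    }

    final Node<T> head;
    final Node<T> tail;

    SentinelList() {
        // head holds the minimum key and tail the maximum, so every
        // traversal starts at head and is guaranteed to stop by tail.
        tail = new Node<>(null, Integer.MAX_VALUE, null);
        head = new Node<>(null, Integer.MIN_VALUE, tail);
    }

    // Unsynchronized lookup: walk the sorted keys until reaching one >= key.
    boolean contains(T x) {
        int key = x.hashCode();
        Node<T> curr = head.next;
        while (curr.key < key) {
            curr = curr.next;
        }
        return curr != tail && curr.key == key;
    }
}
```

The sentinels mean that insertions and removals never have to special-case an empty list or the ends of the list.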

Our Goals

- Correctness, safety, liveness
  - linearizability
  - deadlock-freedom
  - starvation-freedom?
  - nonblocking???
- Performance
  - parallelism?

Synchronization Philosophies

- Coarse-Grained
  - lock whole data structure for every operation
- Fine-Grained
  - only lock what is needed to avoid disaster
- Optimistic
  - don’t lock anything to read
  - lock to modify
- Lazy
  - use “logical” removal
  - only use locks occasionally
- Nonblocking
  - use atomics, not locks!

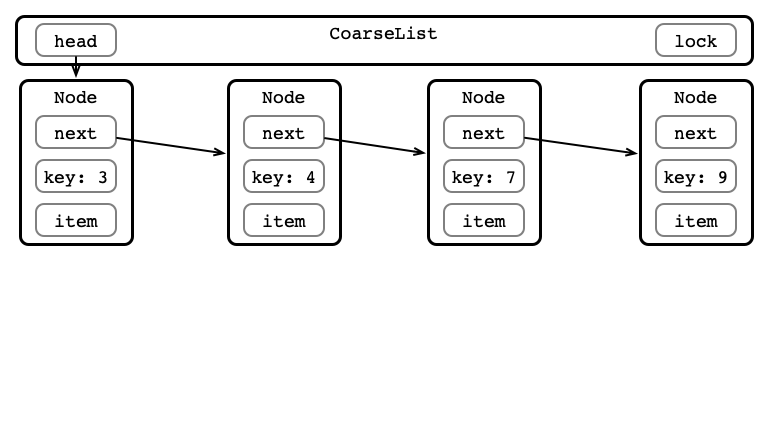

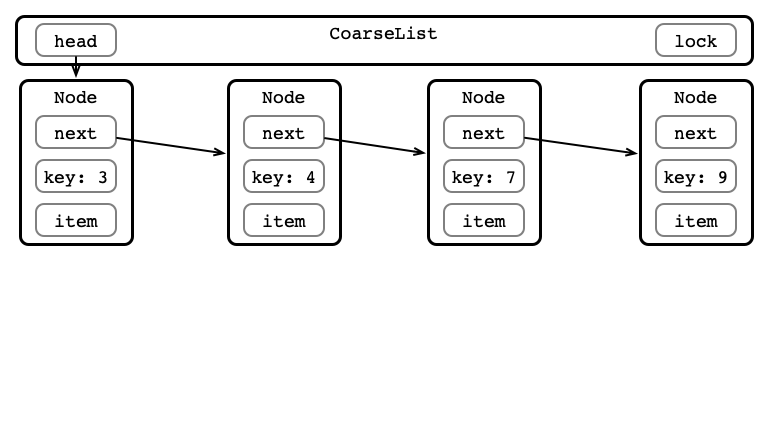

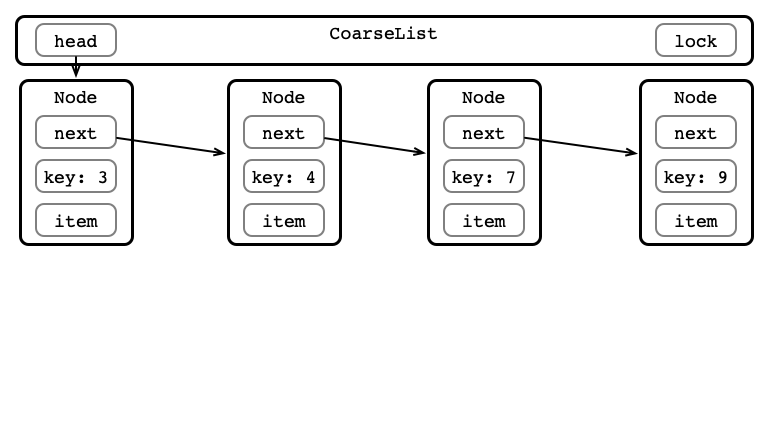

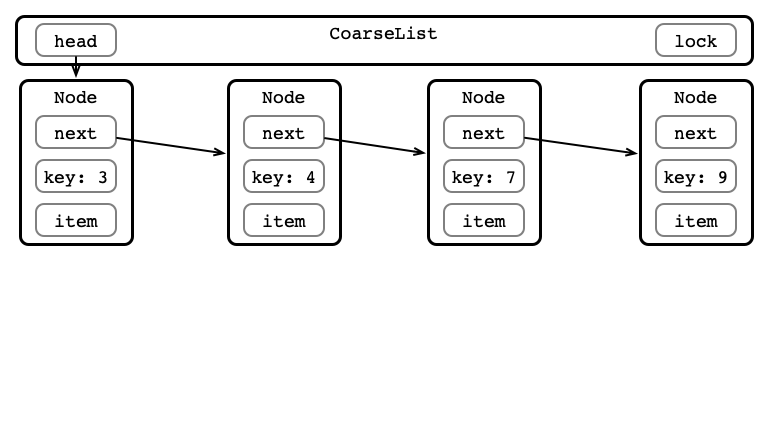

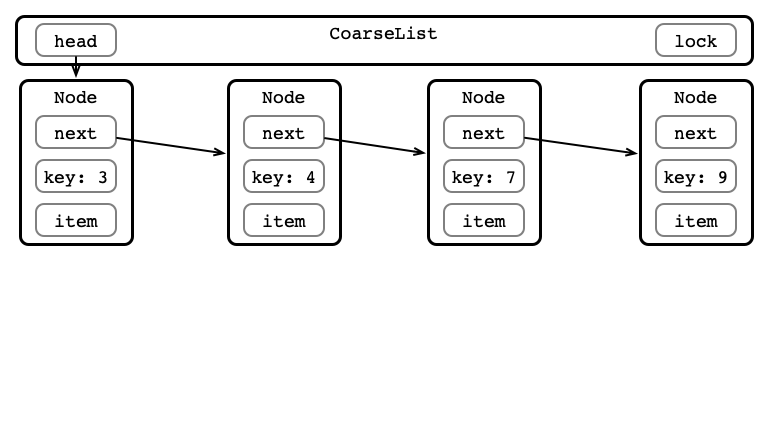

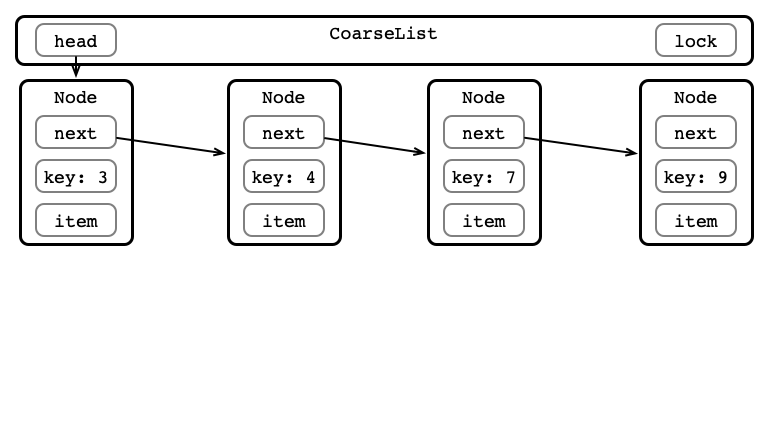

Coarse-grained Linked List

One lock for whole data structure

For any operation:

- Lock entire list

- Perform operation

- Unlock list
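The lock / operate / unlock pattern above could be sketched as follows. This is an illustrative version under assumptions, not the course's reference implementation: `CoarseList` is a hypothetical name, it mirrors the `SimpleSet` methods without declaring the interface so that the block compiles on its own, and equal hash codes are treated as equal elements.

```java
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;

class CoarseList<T> {
    private static final class Node<T> {
        final T item;
        final int key;
        Node<T> next;

        Node(T item, int key, Node<T> next) {
            this.item = item;
            this.key = key;
            this.next = next;
        }
    }

    private final Node<T> tail = new Node<>(null, Integer.MAX_VALUE, null);
    private final Node<T> head = new Node<>(null, Integer.MIN_VALUE, tail);
    private final Lock lock = new ReentrantLock();   // one lock for the whole list

    public boolean add(T x) {
        int key = x.hashCode();
        lock.lock();                                 // lock entire list
        try {
            Node<T> pred = head;
            Node<T> curr = head.next;
            while (curr.key < key) {                 // find correct location
                pred = curr;
                curr = curr.next;
            }
            if (curr != tail && curr.key == key) {
                return false;                        // already present
            }
            pred.next = new Node<>(x, key, curr);    // do insertion
            return true;
        } finally {
            lock.unlock();                           // unlock list
        }
    }

    public boolean remove(T x) {
        int key = x.hashCode();
        lock.lock();
        try {
            Node<T> pred = head;
            Node<T> curr = head.next;
            while (curr.key < key) {
                pred = curr;
                curr = curr.next;
            }
            if (curr == tail || curr.key != key) {
                return false;                        // not present
            }
            pred.next = curr.next;                   // unlink curr
            return true;
        } finally {
            lock.unlock();
        }
    }

    public boolean contains(T x) {
        int key = x.hashCode();
        lock.lock();
        try {
            Node<T> curr = head.next;
            while (curr.key < key) {
                curr = curr.next;
            }
            return curr != tail && curr.key == key;
        } finally {
            lock.unlock();
        }
    }
}
```

Releasing the lock in a `finally` block keeps the list usable even if an operation throws, for example when `x` is `null`.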

Coarse-grained List Figure

Add item with Key 5

Step 1: Lock List

Step 2: Find Correct Location

Step 3: Do Insertion

Step 4: Unlock List

In Code

- Look at implementation

- Test performance

- This is our baseline!

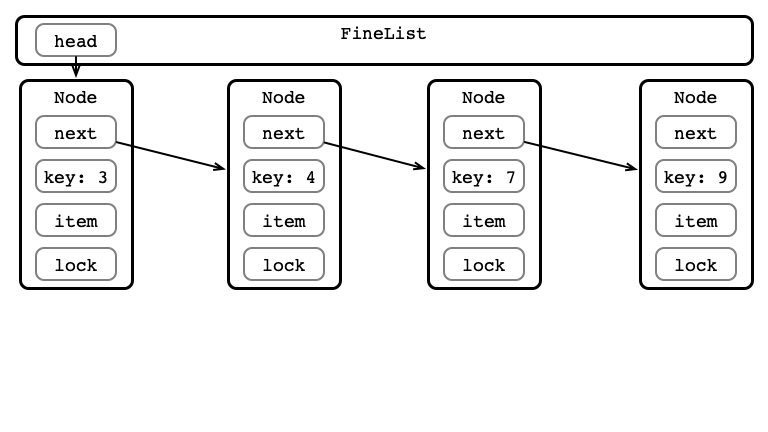

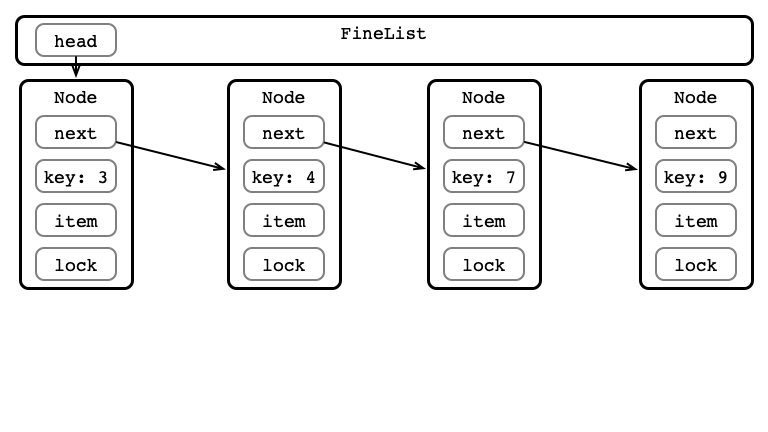

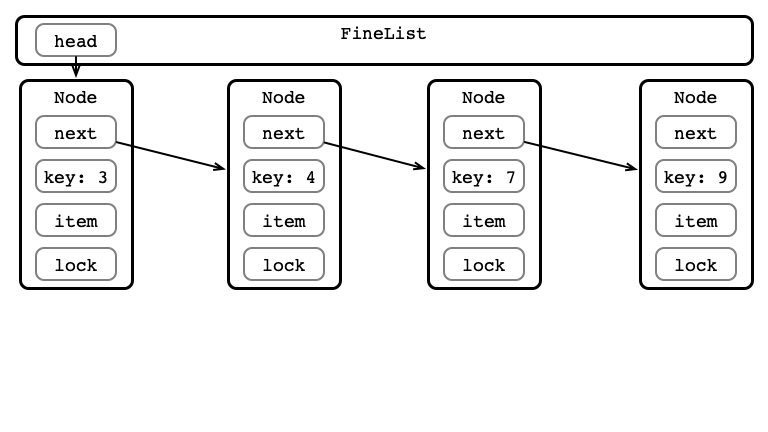

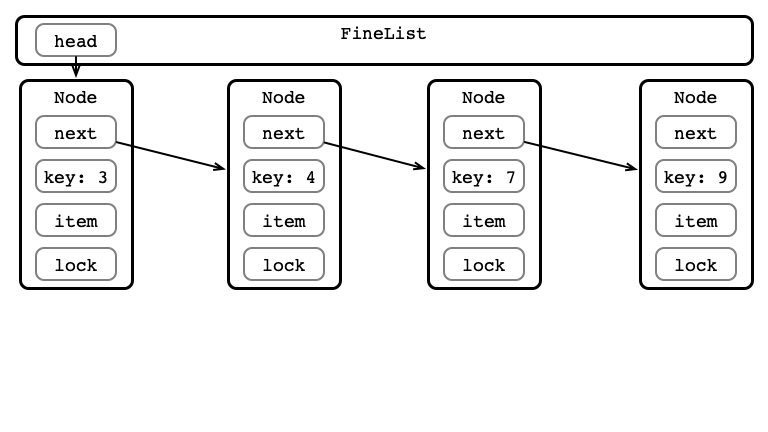

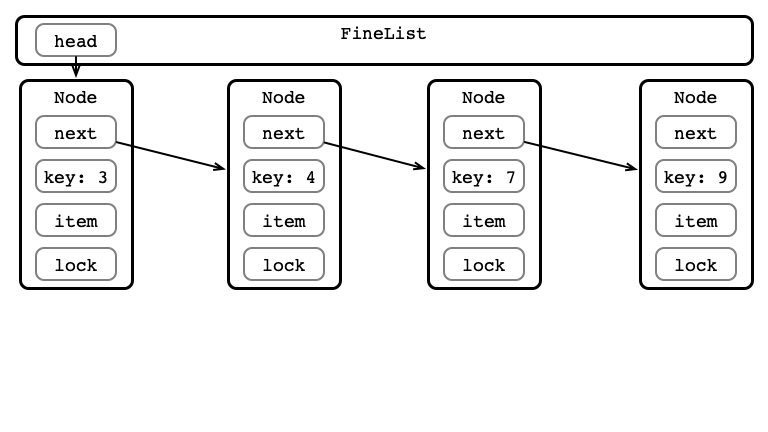

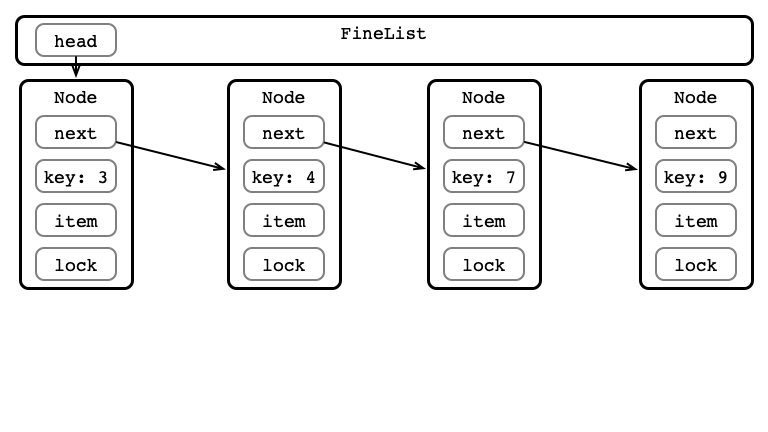

Fine-grained Linked List

One lock per node

For any operation:

- Store `curr`, `pred` (initialized to head)
  - lock `pred`
- Hand-over-hand locking:
  - lock `curr = pred.next`
  - unlock `pred`
  - set `pred = curr`
  - repeat until correct location found
- Perform operation
- Release locks
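A sketch of hand-over-hand locking for `add` and `remove`, following the steps above. `FineList` is a hypothetical name, and the code again assumes that equal hash codes mean equal elements. The key point it tries to show is that the next node is always locked before the previous one is released.

```java
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;

class FineList<T> {
    private static final class Node<T> {
        final T item;
        final int key;
        Node<T> next;
        final Lock lock = new ReentrantLock();   // one lock per node

        Node(T item, int key, Node<T> next) {
            this.item = item;
            this.key = key;
            this.next = next;
        }
    }

    private final Node<T> tail = new Node<>(null, Integer.MAX_VALUE, null);
    private final Node<T> head = new Node<>(null, Integer.MIN_VALUE, tail);

    public boolean add(T x) {
        int key = x.hashCode();
        Node<T> pred = head;
        pred.lock.lock();                        // set and lock pred
        Node<T> curr = pred.next;
        curr.lock.lock();
        try {
            while (curr.key < key) {             // hand-over-hand traversal
                pred.lock.unlock();
                pred = curr;
                curr = curr.next;
                curr.lock.lock();
            }
            if (curr != tail && curr.key == key) {
                return false;                    // already present
            }
            pred.next = new Node<>(x, key, curr);
            return true;
        } finally {
            curr.lock.unlock();                  // release both held locks
            pred.lock.unlock();
        }
    }

    public boolean remove(T x) {
        int key = x.hashCode();
        Node<T> pred = head;
        pred.lock.lock();
        Node<T> curr = pred.next;
        curr.lock.lock();
        try {
            while (curr.key < key) {
                pred.lock.unlock();
                pred = curr;
                curr = curr.next;
                curr.lock.lock();
            }
            if (curr == tail || curr.key != key) {
                return false;                    // not present
            }
            pred.next = curr.next;               // unlink while holding both locks
            return true;
        } finally {
            curr.lock.unlock();
            pred.lock.unlock();
        }
    }
}
```

Since every thread acquires node locks in list order, starting from `head`, no cycle of waiting threads can form.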

Fine-grained List Figure

Add item with Key 5

Step 1: Set and Lock pred

Step 2: Hand over Hand Locking

Step 3: Perform Insert

Step 4: Release Locks

Test

- More efficient than coarse?

- Why or why not?

- What affects performance?

Optimistic Linked List

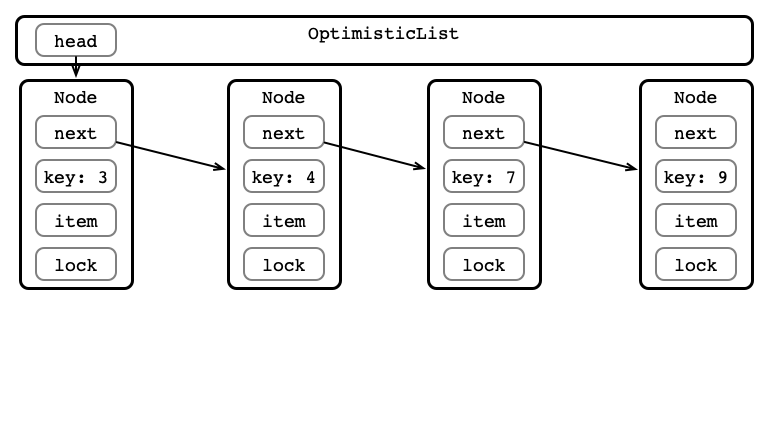

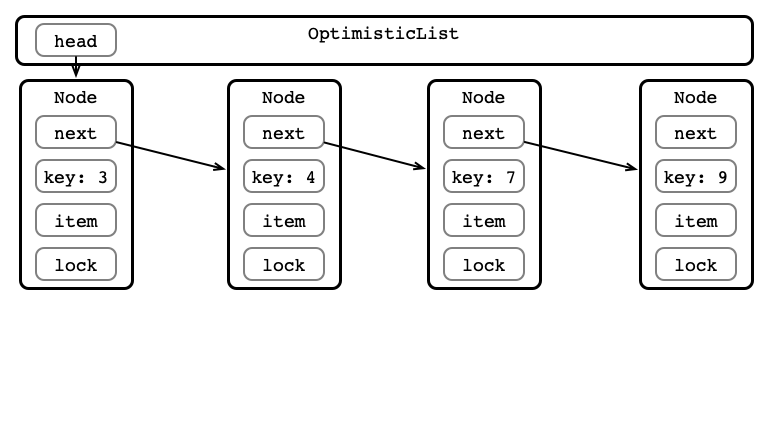

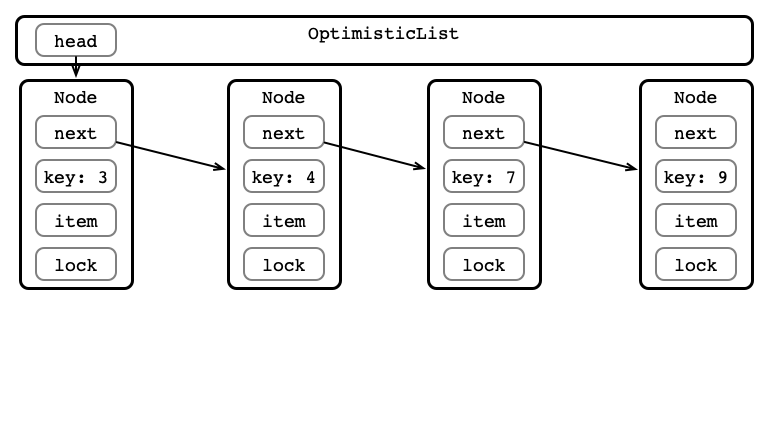

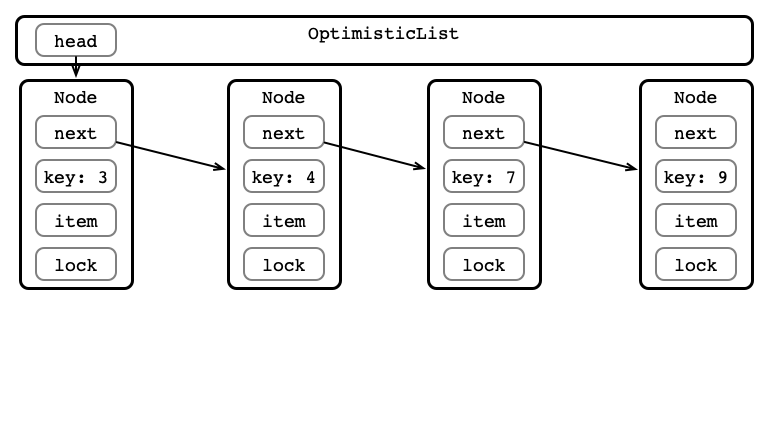

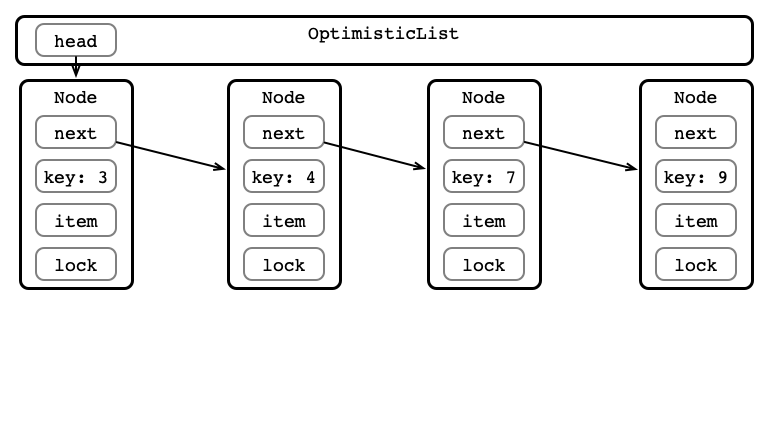

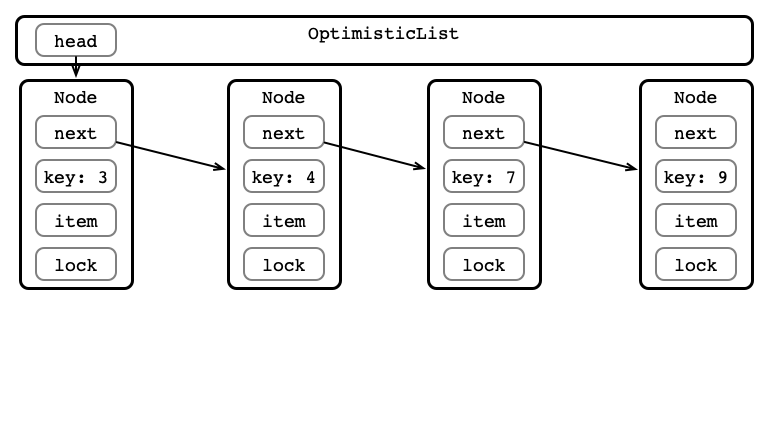

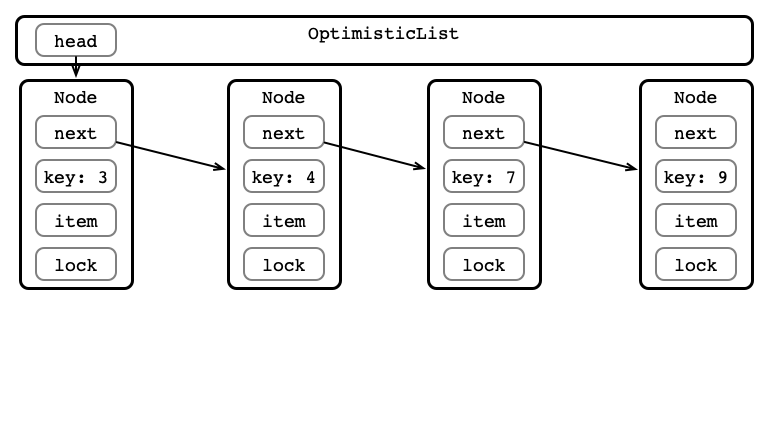

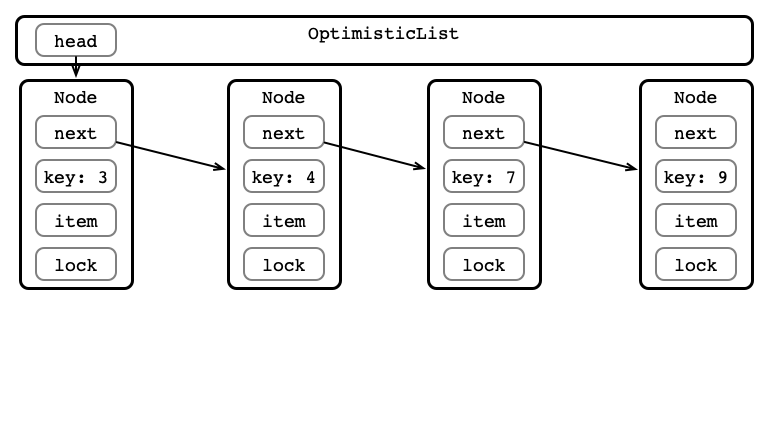

One lock per node

For any operation:

1. Find location
2. Acquire locks
3. Validate location
   - go back to 1 if this fails!
4. Perform operation
5. Release locks
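The find / lock / validate / operate / release cycle could be sketched as below. `OptimisticList` and `validate` are hypothetical names. Here `validate` is assumed to mean "re-traverse from `head` and check that `pred` is still reachable and still points to `curr`", which is one common way to do it, and `next` is declared `volatile` so that the lock-free traversal sees up-to-date links. Equal hash codes are again treated as equal elements.

```java
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;

class OptimisticList<T> {
    private static final class Node<T> {
        final T item;
        final int key;
        volatile Node<T> next;                   // read without holding a lock
        final Lock lock = new ReentrantLock();

        Node(T item, int key, Node<T> next) {
            this.item = item;
            this.key = key;
            this.next = next;
        }
    }

    private final Node<T> tail = new Node<>(null, Integer.MAX_VALUE, null);
    private final Node<T> head = new Node<>(null, Integer.MIN_VALUE, tail);

    // Called with pred and curr locked: pred must still be reachable from
    // head and must still point to curr.
    private boolean validate(Node<T> pred, Node<T> curr) {
        Node<T> node = head;
        while (node.key <= pred.key) {
            if (node == pred) {
                return pred.next == curr;
            }
            node = node.next;
        }
        return false;
    }

    public boolean add(T x) {
        int key = x.hashCode();
        while (true) {                           // retry until validation succeeds
            Node<T> pred = head;
            Node<T> curr = head.next;
            while (curr.key < key) {             // 1. find location, no locks
                pred = curr;
                curr = curr.next;
            }
            pred.lock.lock();                    // 2. acquire locks
            curr.lock.lock();
            try {
                if (validate(pred, curr)) {      // 3. validate
                    if (curr != tail && curr.key == key) {
                        return false;
                    }
                    pred.next = new Node<>(x, key, curr);   // 4. perform operation
                    return true;
                }
            } finally {
                curr.lock.unlock();              // 5. release locks
                pred.lock.unlock();
            }
        }
    }

    public boolean remove(T x) {
        int key = x.hashCode();
        while (true) {
            Node<T> pred = head;
            Node<T> curr = head.next;
            while (curr.key < key) {
                pred = curr;
                curr = curr.next;
            }
            pred.lock.lock();
            curr.lock.lock();
            try {
                if (validate(pred, curr)) {
                    if (curr == tail || curr.key != key) {
                        return false;
                    }
                    pred.next = curr.next;       // unlink curr
                    return true;
                }
            } finally {
                curr.lock.unlock();
                pred.lock.unlock();
            }
        }
    }
}
```

A `contains` method would follow the same loop, returning whether `curr` holds the key once validation succeeds.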

Optimistic List Figure

Add item with Key 5

Step 1: Find Location

Step 2: Acquire Locks

Step 3: Validate

Step 4: Perform Operation

Step 5: Release Locks

Why Could Validation Fail?

Questions

- Liveness: Deadlock-free? Starvation-free?

- Performance: When do you expect good performance? When not?

Next Time:

- Lazy Synchronized List

- Nonblocking Synchronized List

- Other Data Structures